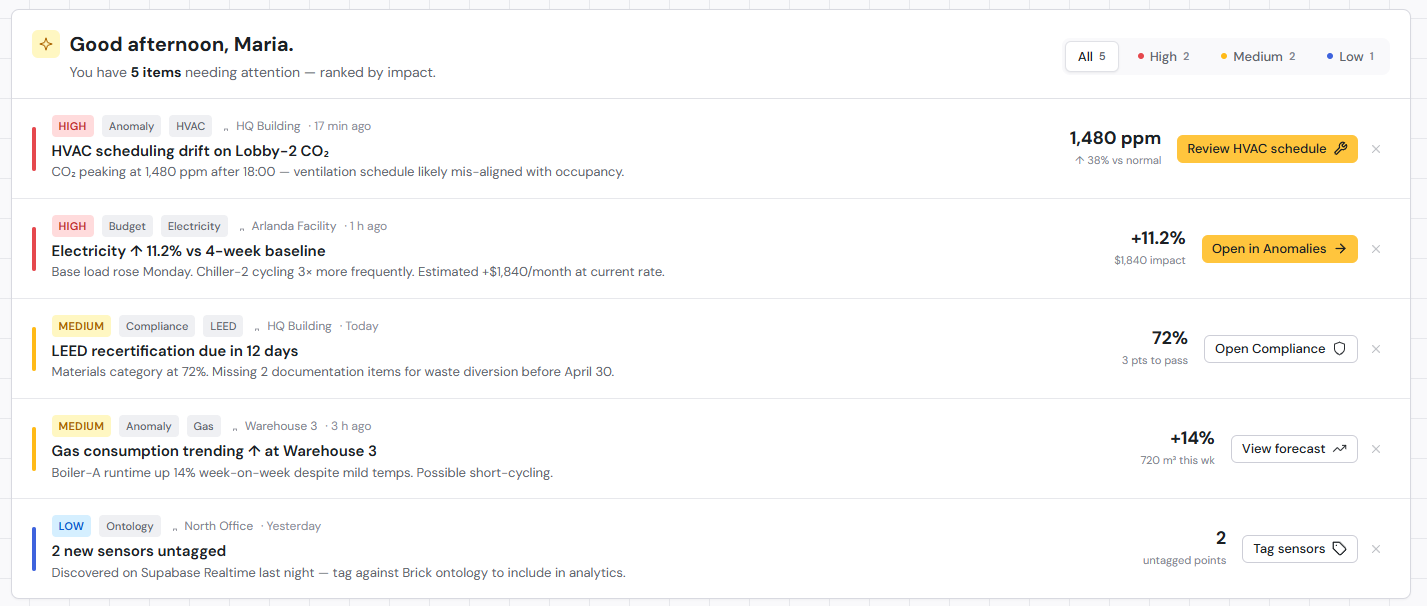

The Tuesday afternoon energy spike. The chiller that fails on a Friday night. The comfort complaint that lands the day after the auditor leaves. By the time these show up on a monitoring dashboard, they are already problems.

That is the limit of monitoring. It tells you what just happened. It cannot tell you what is about to.

Forecasting changes the question. Instead of asking what the building is doing right now, it asks what the building is going to do tomorrow, next week, or during the next heatwave. The answer is what lets a facilities team get ahead of a problem instead of explaining it after the fact.

For buildings with hundreds or thousands of sensors, that shift matters. The data is already there. The question is whether anything is doing the forward-looking work with it.

Why forecasting matters more than monitoring

A modern BMS already produces a flood of real-time numbers. Temperatures every few seconds, energy at granular intervals, equipment status, occupancy signals. Most of that data has a short life. It triggers an alarm or it gets written to a database. Either way, the value of any single reading drops sharply within a few minutes of it arriving.

Forecasting extends the useful life of the data. Yesterday’s CO2 readings, last month’s HVAC runtimes, and three years of energy meter history become inputs to a prediction about tomorrow. The numbers that used to be archive material start doing work again.

The operational difference shows up in how teams spend their time. A reactive operation responds to events. A predictive one schedules around them. The hours that used to go into emergency callouts and after-the-fact root cause analysis shift toward planning, preparation, and the kind of low-stress maintenance that costs less and breaks less.

What sensor forecasting actually predicts

Sensor forecasting is not a single output. It is a layered set of predictions, each tied to a different operational question.

Energy demand. Short-horizon forecasts (the next 24 hours) inform day-ahead market and demand response participation, and help avoid peak charges. Medium-horizon forecasts (the next 7 days) feed maintenance scheduling, staffing, and tariff management. Seasonal forecasts support budget planning and certification reporting.

Temperature and comfort trajectories. A zone is currently at 21°C, but where will it be in three hours under the current setpoints and the incoming weather? Comfort trajectories expose buildings that look fine on the dashboard while drifting somewhere occupants will notice.

Equipment degradation curves. Sensors produce signatures that change as equipment wears. Vibration patterns shift. Power draw climbs for the same output. Pump efficiency drops a percentage point a month. Forecasting these curves lets a maintenance team intervene before a failure window opens.
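As a rough illustration of extrapolating a degradation curve, the sketch below fits a straight line to monthly efficiency readings and estimates how long until the trend crosses a service threshold. The function name, the readings, and the threshold are hypothetical, and a linear trend is an assumption made for simplicity. Real wear is often nonlinear.

```python
def months_until_threshold(efficiency_pct, threshold_pct):
    """Estimate months from the last reading until the fitted trend hits the threshold.

    efficiency_pct: one reading per month, oldest first (hypothetical data).
    Returns None when the fitted trend is flat or improving.
    """
    n = len(efficiency_pct)
    if n < 2:
        raise ValueError("need at least two monthly readings")

    # Ordinary least-squares line through (month index, efficiency).
    x_mean = (n - 1) / 2
    y_mean = sum(efficiency_pct) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(efficiency_pct))
    slope = sxy / sxx
    if slope >= 0:
        return None  # no downward trend to extrapolate

    intercept = y_mean - slope * x_mean
    crossing_month = (threshold_pct - intercept) / slope
    # Months remaining after the most recent reading (index n - 1).
    return max(0.0, crossing_month - (n - 1))


# Illustrative only: a pump losing about one point per month.
readings = [80.0, 79.0, 78.0, 77.0, 76.0, 75.0]
remaining = months_until_threshold(readings, threshold_pct=70.0)
```

A production model would also put a confidence band around the crossing date, so the output is a failure window and not a single day.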

Peak load timing. Knowing the magnitude of a peak is not the same as knowing the half-hour interval it will hit. Predicting the timing is what makes load shifting and battery dispatch worthwhile.
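One way to express timing uncertainty, assuming the forecast can be sampled as several plausible load paths (an ensemble), is to count how often each half-hour interval holds the peak. The function name and the sample paths below are hypothetical.

```python
from collections import Counter


def peak_timing_distribution(scenarios):
    """Return {interval_index: probability that the peak falls in that interval}.

    scenarios: list of equal-length load paths, one kW value per half-hour
    interval (hypothetical ensemble members). Ties go to the earliest interval.
    """
    if not scenarios:
        raise ValueError("need at least one scenario")
    # Index of the maximum value in each path.
    peaks = Counter(max(range(len(path)), key=path.__getitem__) for path in scenarios)
    total = len(scenarios)
    return {idx: count / total for idx, count in sorted(peaks.items())}


# Illustrative only: four sampled paths over three half-hour intervals.
paths = [
    [310.0, 420.0, 390.0],
    [305.0, 400.0, 415.0],
    [300.0, 430.0, 380.0],
    [320.0, 410.0, 405.0],
]
timing = peak_timing_distribution(paths)
```

If the probability is spread across several intervals, a load-shifting or battery plan needs to cover a wider window than the single most likely half hour.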

These forecasts are not independent of each other. They are connected, because the building is. A heatwave shifts comfort trajectories, which shifts HVAC load, which shifts peak timing, which stresses equipment differently than a mild week would. A forecasting system that handles them in isolation misses the interaction effects that matter most.

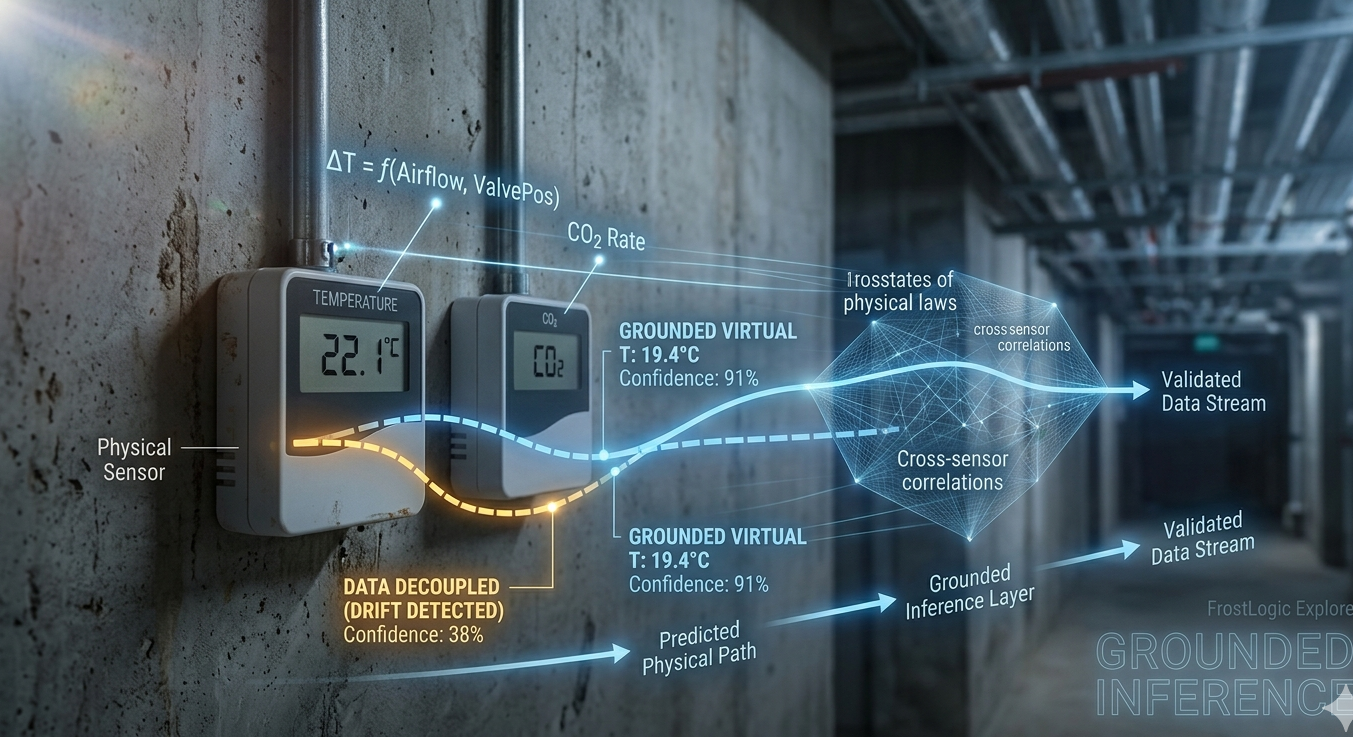

How it works: from time-series data to confidence-bounded predictions

A forecast is a model’s best estimate of future sensor values, conditioned on what it has seen before and what it knows about the building.

The inputs are time-series data: temperatures, energy meters, airflow, occupancy proxies, CO2, equipment runtimes. Layered on top of that is the context that makes the data meaningful: outdoor weather forecasts, calendar patterns, scheduled events, building occupancy schedules, tariff structures.

The model learns the relationships between these inputs and the values it is trying to predict. Some patterns are easy. Outdoor temperature drives heating load in winter. Friday afternoon occupancy drops earlier than Tuesday. Other patterns are harder. The interaction between a setpoint change at 6 AM and the energy consumption at 2 PM depends on building thermal mass, weather, internal loads, and equipment behavior over the intervening hours.

What separates a useful forecast from a number on a screen is what the model says about its own uncertainty.

A single-line prediction (“energy demand at 3 PM will be 412 kW”) is rarely the most useful output. A confidence-bounded prediction is. “Energy demand at 3 PM will be between 395 and 430 kW with 90% confidence” tells an operator how much room they have to plan. If the band is narrow, the forecast can drive a tight schedule. If it is wide, the operator knows to keep margin and check the inputs.
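A minimal sketch of one way to turn a point forecast into a bounded one, assuming a log of past forecast errors at the same horizon is available. It uses empirical error quantiles, which is a common generic technique and not necessarily how any particular product computes its bounds. The function name and the minimum sample size are assumptions.

```python
from statistics import quantiles


def prediction_interval(point_forecast, past_errors, coverage=0.90):
    """Return (low, high) around a point forecast from empirical error quantiles.

    past_errors: (actual - forecast) residuals from earlier forecasts made at
    the same horizon. The 20-sample minimum is an arbitrary illustrative guard.
    """
    if len(past_errors) < 20:
        raise ValueError("too few residuals for a stable interval")
    # n=100 yields 99 cut points: index 0 is the 1st percentile, index 98 the 99th.
    cuts = quantiles(past_errors, n=100, method="inclusive")
    tail = (1.0 - coverage) / 2.0
    low_idx = round(tail * 100) - 1           # 5th percentile for 90% coverage
    high_idx = round((1.0 - tail) * 100) - 1  # 95th percentile for 90% coverage
    return point_forecast + cuts[low_idx], point_forecast + cuts[high_idx]


# Illustrative only: residuals in kW from a hypothetical forecast log.
errors = [float(e) for e in range(-50, 51)]
low, high = prediction_interval(412.0, errors)
```

Because the band comes straight from observed errors, it is allowed to be asymmetric: a model that tends to under-predict peaks will show more room on the high side.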

FrostLogic Explore runs forecasts across multiple horizons (hours, days, weeks, seasons), each with explicit confidence bounds. The horizons are not independent runs of the same model. They are nested forecasts that share information, so a surprising short-term result updates the longer-term outlook automatically.

Conceptually, the system reads as: historical time series, plus external context, plus the physical relationships the building obeys, all feeding a probabilistic forecast over a chosen horizon. The bounds widen as uncertainty grows further into the future.
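To make the widening concrete, the sketch below attaches bounds that grow with the square root of the horizon. That rule holds when step-to-step errors are independent and accumulate like a random walk, which is an assumption here and not a description of how Explore computes its bounds. The function name and the numbers are hypothetical.

```python
import math


def widening_bounds(point_forecasts, sigma_one_step, z=1.645):
    """Return (low, point, high) per step, with bounds growing as sqrt(horizon).

    sigma_one_step: standard deviation of the one-step-ahead error.
    z=1.645 corresponds to roughly 90% coverage under normally distributed errors.
    """
    bands = []
    for horizon, value in enumerate(point_forecasts, start=1):
        half_width = z * sigma_one_step * math.sqrt(horizon)
        bands.append((value - half_width, value, value + half_width))
    return bands


# Illustrative only: a flat 400 kW forecast, four steps ahead.
bands = widening_bounds([400.0, 400.0, 400.0, 400.0], sigma_one_step=10.0)
```

Under this assumption the band at step four is twice as wide as at step one, which is the kind of growth an operator should expect to see when comparing a next-hour forecast with a next-day one.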

Real-world applications

The value of forecasting shows up in what changes operationally. Three examples.

Pre-cooling for an expected heat spike. Wednesday’s forecast shows outdoor temperatures climbing 6°C above the weekly average, with peak grid pricing arriving in the afternoon. Without forecasting, the building reacts. Cooling demand surges into peak pricing, comfort drifts during the worst hours, and the energy bill takes the hit. With forecasting, the chiller starts earlier on cheap overnight power, the building’s thermal mass absorbs the load, and afternoon demand stays inside target. The savings come from acting on a future the building already saw coming.

Maintenance scheduled before a predicted failure window. AHU-4 has been running fine for months. The vibration profile starts shifting in a way the forecast model recognizes from previous bearing failures across the portfolio. The system flags a probable failure window two to four weeks out, with widening confidence bounds. The maintenance team books the work for week three, before any operational impact, instead of dispatching an emergency crew on a Saturday night.

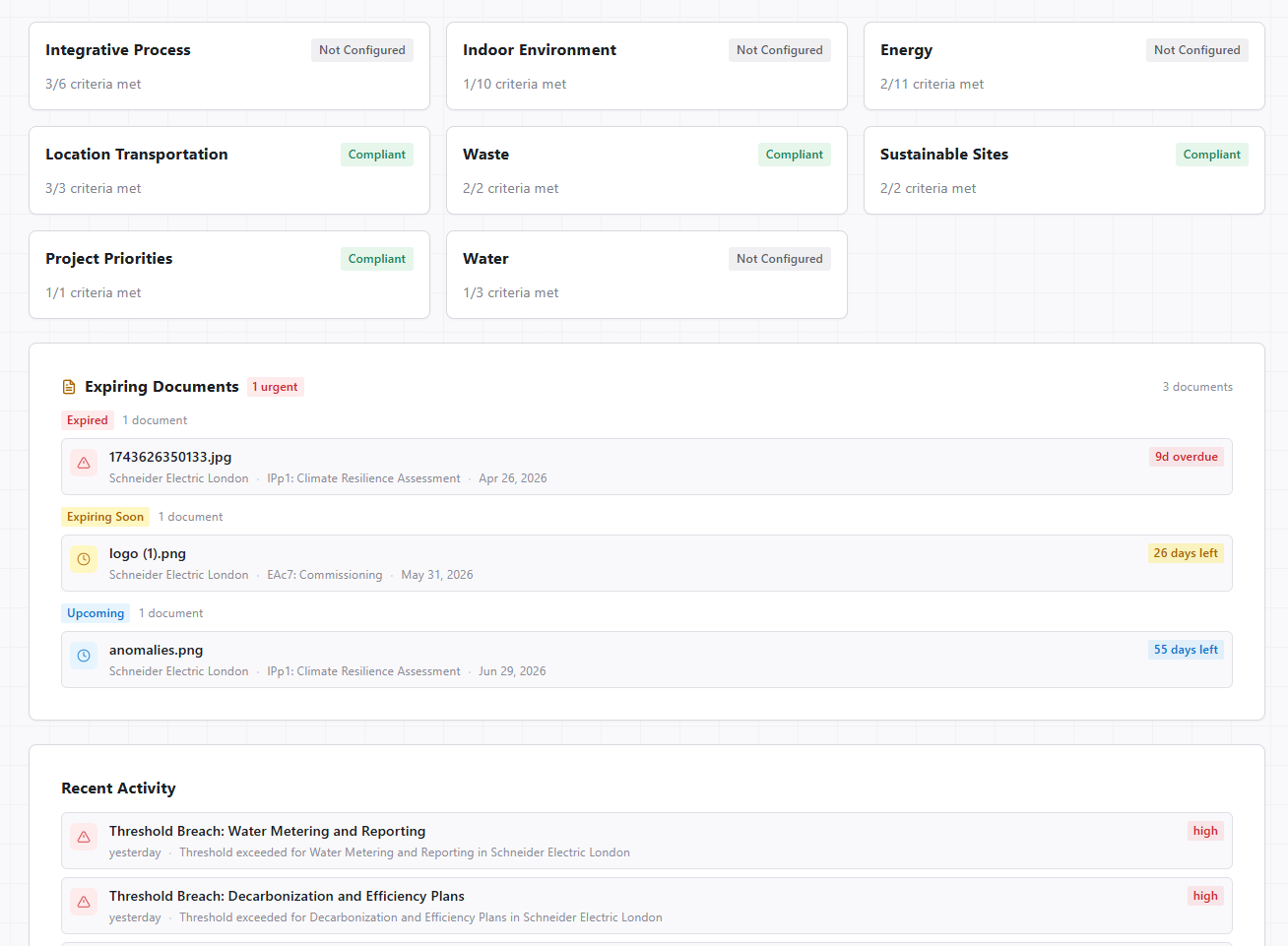

Proving a certification threshold will not be breached. An auditor wants evidence that the building will stay inside a thermal comfort certification band for the next quarter. A monitoring system can show historical compliance. A forecast can show projected compliance under expected conditions, with the confidence band quantifying how much weather variation the building can absorb before drifting out of range.
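A sketch of how projected compliance might be summarized, assuming the forecast is available as a (low, high) temperature band per interval. The function name, the comfort limits, and the sample bands are hypothetical.

```python
def compliance_summary(forecast_bands, comfort_low, comfort_high):
    """Return (share of intervals fully inside the comfort band, tightest margin).

    forecast_bands: list of (low, high) forecast bounds in degrees C per interval.
    A negative margin means the forecast band pokes outside the comfort band.
    """
    if not forecast_bands:
        raise ValueError("need at least one forecast interval")
    inside = 0
    tightest = float("inf")
    for low, high in forecast_bands:
        # Distance from each forecast bound to the nearest comfort limit.
        margin = min(low - comfort_low, comfort_high - high)
        tightest = min(tightest, margin)
        if margin >= 0:
            inside += 1
    return inside / len(forecast_bands), tightest


# Illustrative only: three forecast intervals against a 20-24 degree C band.
share, margin = compliance_summary(
    [(21.0, 23.0), (21.5, 24.5), (20.5, 22.0)],
    comfort_low=20.0,
    comfort_high=24.0,
)
```

The tightest margin is the number an auditor is likely to care about: it shows how much additional drift the building can absorb before the projection leaves the band.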

Each of these uses the same underlying forecasts. The operational value comes from the application layer that turns a prediction into a plan.

What-if simulation: forecasting meets scenario planning

A forecast says what will happen if conditions follow their expected path. A scenario asks what would happen if they did not, or if the building itself were operated differently.

This is where forecasting becomes a planning tool, not just an early warning system. Take a question like: what happens to weekly energy costs if we raise the cooling setpoint by 1°C during occupied hours? A pure forecast cannot answer that. The setpoint change is a counterfactual. It is asking the model to predict an outcome under conditions the building has not been running under.

Causal models go past the correlations in the historical data. They encode how the building actually responds to changes. A setpoint adjustment reduces compressor runtime, which lowers electricity demand, which interacts with peak pricing, which feeds back into total cost. The relationships are physical, not just statistical, so the model can extrapolate to a setpoint the building has not seen before in the same conditions.
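The chain from setpoint to cost can be sketched with a deliberately simple physical model. Everything below is a toy: it assumes steady-state heat gain proportional to the indoor-outdoor temperature difference, a fixed chiller efficiency, and one-hour steps, and it ignores thermal mass and internal loads. The function name and all parameter values are hypothetical.

```python
def cooling_cost(outdoor_c, prices_per_kwh, setpoint_c, ua_kw_per_k=2.0, cop=3.0):
    """Estimate cooling electricity cost over hourly steps for a given setpoint.

    ua_kw_per_k: assumed envelope heat gain per degree of temperature difference.
    cop: assumed chiller coefficient of performance (thermal kW per electric kW).
    """
    cost = 0.0
    for t_out, price in zip(outdoor_c, prices_per_kwh):
        # Heat that must be removed; zero when outdoors is cooler than the setpoint.
        thermal_kw = max(0.0, ua_kw_per_k * (t_out - setpoint_c))
        electric_kwh = thermal_kw / cop  # one-hour step, so kW equals kWh
        cost += electric_kwh * price
    return cost


# Illustrative only: three hours with a price peak in the middle hour.
outdoor = [30.0, 33.0, 27.0]
prices = [0.10, 0.30, 0.10]
baseline = cooling_cost(outdoor, prices, setpoint_c=24.0)
raised = cooling_cost(outdoor, prices, setpoint_c=25.0)
saving = baseline - raised
```

Because the relationship is encoded as physics and not learned from history, the same function can be evaluated at a setpoint the building has never run at, which is the property the what-if question depends on.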

Explore’s causal intelligence layer lets operators run these simulations on top of the forecast. You can ask: what if we delayed the morning ramp-up by 30 minutes? What if outdoor temperatures run 2°C above the seasonal average? What if AHU-3 is taken offline for service the week of June 12? Each answer arrives with the same confidence bounds the underlying forecast carries, so the planning has a measure of its own uncertainty built in.

This matters most for decisions that cannot be tested live. Lowering a setpoint to see what happens is fine in a lab. In a building with tenants, it is a customer-experience risk. Simulating the outcome first turns a guess into a defended decision. For the broader context of how predictive intelligence layers on top of standard BMS data, see our piece on building insights.

Getting started with sensor forecasting

The practical requirements for forecasting in a building are smaller than they sound.

Data history. Twelve months of sensor data is a useful baseline. It captures seasonality, weekday and weekend patterns, and at least one full cooling and heating cycle. Buildings with less history can still get short-horizon forecasts immediately. The seasonal layer just takes longer to mature.

Sensor coverage. Energy meters, indoor temperatures, outdoor weather feeds, and equipment runtime signals are the minimum useful set. CO2 and humidity add comfort dimensions. Vibration and current-draw measurements add predictive maintenance value. Forecasts adapt to the sensors a building actually has, not the ones a vendor wishes were there.

BMS integration. Explore reads from existing BMS infrastructure through standard protocols (BACnet, Modbus, OPC UA) and modern APIs. The forecasting workload runs in the cloud, while the BMS continues to handle real-time control. For buildings with on-premises constraints or specific data residency requirements, sensitive workloads can run in edge deployments instead.

Operational handoff. A forecast that nobody acts on is dashboard wallpaper. The first month of deployment usually focuses on calibrating which forecasts the facilities team will rely on, what alert thresholds make sense, and how the predictions flow into the daily routine. Forecasts get used when they are tied to a decision someone is already trying to make.

Forecasting is not a replacement for monitoring. It sits on top of it. The building still needs reliable sensors, clean data pipelines, and operators who know the systems. What forecasting adds is time. Time to act before a heatwave instead of during it. Time to schedule maintenance instead of responding to a breakdown. Time to defend a compliance number with a projection instead of explaining a deviation after the inspection.

For technical buyers evaluating tools, the questions worth asking are the boring ones. How wide are the confidence bands on a typical 7-day forecast for a building like ours? How does the model handle sensor outages mid-forecast? Can it explain why a particular forecast moved between yesterday and today? Can it run scenarios that vary meaningfully from current conditions? The marketing layer answers the first question. The other three answer whether the system can be trusted with a real operational decision.

FrostLogic Explore brings sensor forecasting, scenario simulation, and confidence-bounded predictions to commercial and industrial buildings. Learn more about Sensor Intelligence or request a demo.